Oh man. Agents, OpenClaw, and my goodness what is going on?

If you haven’t read this yet, please do. (Thanks to Allan Drummond for bringing this to my attention!) TLDR for this post: serious academics (like Scott Dodelson in the hyperlink above) have now been converted, and the AI era is officially here. So now what do we do?

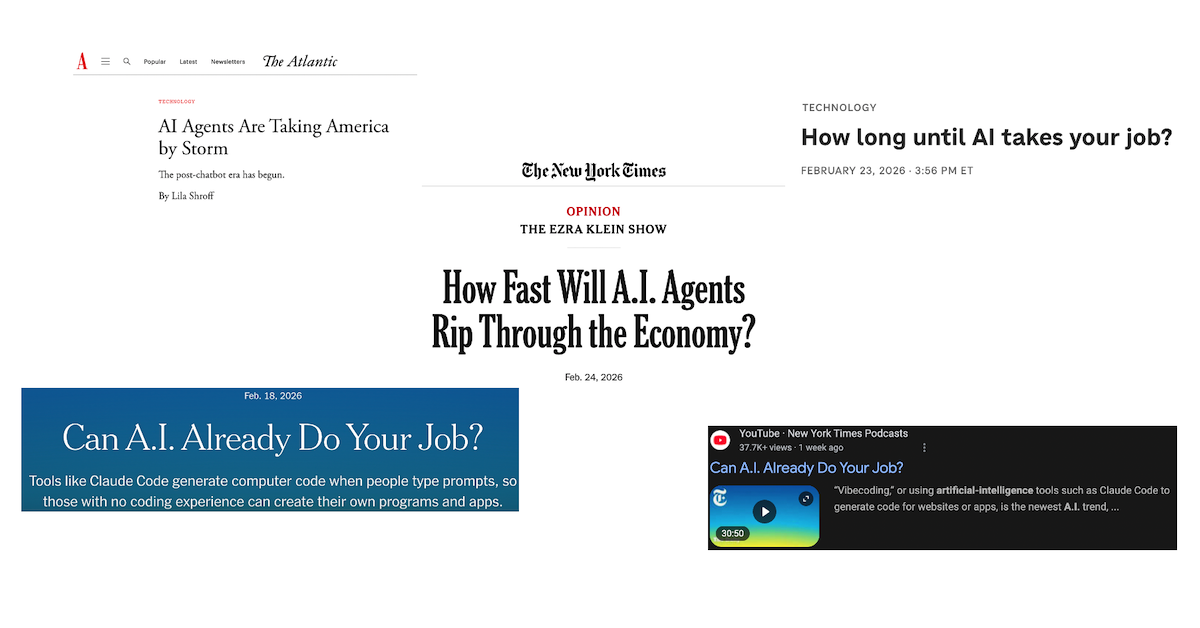

About a year ago, when people were talking about agentic AI (or AGI), nearly every person I knew within academia rolled their eyes, laughed, and inevitably thought that our tech overlords (as they are colloquially known) were full of it. Now, to be fair, they still could have been full of it. But some version of what they had been talking about is now real. Agents have come, and they are doing seemingly incredible things. This post will not be about these things; you can find this kind of content everywhere online. This post will also not be about what these agents are technically doing in various fields (this review covers that ground for biomedicine and drug discovery). Rather, it provides the viewpoint of someone who was incredibly skeptical as of last year and is now seriously considering what education, training, and life after agents will mean. I also will not pretend to remotely have the answers to such questions; I just want to bring this to the attention of the few people who read this blog: we should really think hard about what our existence means given what is actively happening right now in the AI sphere.

What feels different this time is not that AI can write better paragraphs or solve coding puzzles faster than a graduate student (it can do both…). It is that the boundary of what we thought required human apprenticeship is dissolving. For decades, we trained people under the assumption that expertise required long immersion: years of reading, slow pattern recognition that accumulated over years to decades of education, and trial-and-error intuition building. And that was true. It was true because the central bottleneck was cognitive bandwidth. The scarce resource was the ability to search, synthesize, and generate structured thought.

Agents change this entirely.

If an agent can ingest literature and data in seconds, generate plausible hypotheses, sketch experimental designs, critique itself, and iterate, then the scarcity shifts. The cost of exploration collapses rapidly, as does the cost of drafting and producing content. Initial synthesis becomes remarkably cheap. What does not collapse is judgment. And this is a problem. Why? Because our institutions (universities, companies, etc.) are generally not organized around judgment. They are primarily organized around output: publications, grant submissions, incremental extensions of existing lines of work (a common criticism of the NIH, the granting agency that fuels biomedicine). What happens when throughput becomes cheap?

There is a version of this future where proposal volume explodes because writing is free. (This is already happening… NIH has already said there is a limit to the number of R01s that can be submitted because of AI-generated grants.) Where incremental science floods journals because ideation is cheap. Where the signal-to-noise ratio substantially worsens before it improves. Where the ability to generate is really no longer impressive.

And then there is another version, where the bottleneck moves upward. If agents handle first-pass reasoning, what exactly are we training trainees for? If simulation and model-space exploration outpace wet lab execution, does the function of a wet lab simply become a validation layer for AI? If compute becomes the dominant form of intellectual leverage, does capital allocation shift from pipettes to GPUs? Do we build centralized labs that PIs can ‘rent’ so that we can embrace being lean? These are not rhetorical questions; they are ones that I have actively started to ask myself just in the past few weeks. Answers to these questions affect how we, as PIs, hire, make decisions, budget, and define value. And most fundamentally, they affect which problems are worth pursuing.

Many labs’ research programs are optimization problems inside existing paradigms. Improve this drug. Tune this model. Figure out what this gene/protein/bacterium/cell type ‘does’. Map this pathway more precisely. These are all problems that agents will definitively be able to solve. Better than any single scientist. By far. Don’t believe me? Ask the departments that are hiring crystallographers (hint: there are none…because a video game developer solved their field). This might be an overstatement, but only slightly. Because somewhere along the line, the academic-industrial complex realized that solving structure after structure after structure after structure simply for the sake of solving structures, at the expense of solving more fundamental, intellectually difficult problems, was a good enough way of (i) attracting grant funding, (ii) being seemingly productive, and (iii) writing high-impact publications. (See this excellent review by Paul Nurse outlining this sentiment directly.) Now? I’m not sure a single researcher under the age of 40 thinks this is a good idea. The bottom line: search problems are inherently automatable.

So what remains scarce? Framing. Choosing which search space matters. Redefining the representation of systems. Changing the constraints under which optimization occurs and asking questions that thereby restructure the problem rather than solve it more efficiently. I wonder if this is where human comparative advantage migrates. However, this type of problem-solving requires a fundamentally different kind of training.

Physics is typically regarded as one of the hardest subjects for undergraduates to major in. The way I have heard it said: it is because you can study for a thousand years and still fail the exam. This is because physics isn’t fundamentally about giant lookup tables (à la biology); it is about concepts, invariance, and symmetries. It is about abstraction and representation. Embracing this type of training, from the earliest educational levels, is likely what is needed. And it requires immense courage from both the educator and the student.

Taking this revolution to its logical extension: if AI lowers the cost of doing science, then capital will chase emerging leverage. If we can explore scientific hypothesis space at orders of magnitude lower cost (because of AI, and ultimately because of automated labs coupled with AI), then incrementalism becomes far less defensible. The argument for safe, predictable science weakens, and the opportunity cost of not pursuing bold structural questions dramatically increases. Agents may therefore not just accelerate science; they may change what responsible science even looks like.

Pretending all of this is just another tool feels remarkably naïve. Tools do not reorganize incentive structures so clearly, linearly, and rapidly. Tools do not massively compress cognitive scarcity. And tools do not generally make PIs stay up thinking about what the future of education looks like. We are watching cognitive leverage directly translate into capital leverage. CapEx is turning into OpEx right before our eyes. And if that’s true, then the most important decision we have over the next few years may not be which agent to use, but which problems to commit our limited human judgment toward.

The era of agents likely will not eliminate scientists. But it will expose which parts of science (and medicine also) were just automation waiting to happen, and which parts require something far deeper. And more human. The unsettling question is whether we are willing and able to move where the economics and logic would suggest we go. Or whether we will go there against our will, refusing to see what is in front of us.